Java REST API Benchmark: Tomcat vs Jetty vs Grizzly vs Undertow

It is early 2016, and the question of which Java web container to use keeps coming up, especially with the rise of microservices, where the container is embedded in the application.

Recently, we have been facing the very same question. Should we go with:

- Jetty, well known for its performance, speed and stability?

- Grizzly, which is embedded by default into Jersey?

- Tomcat, the de facto standard web container?

- Undertow, the new kid on the block, prized for its simplicity, modularity and performance?

Our use case is mainly about delivering Java REST APIs using JAX-RS.

Since we were already using Spring, we were also looking into leveraging frameworks such as Spring Boot.

Spring Boot supports Tomcat, Jetty and Undertow out of the box.

This post discusses which web container to use when it comes to delivering a fast, reliable and highly available JAX-RS REST API.

For this article, Jersey is used as the JAX-RS implementation.

We are comparing four of the most popular containers:

- Tomcat (8.0.30)

- Jetty (9.2.14)

- Grizzly (2.22.1)

- Undertow (1.3.10.Final)

The implemented API returns a very simple, constant JSON response, with no extra processing involved.

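    import static javax.ws.rs.core.Response.ok;

    import javax.ws.rs.GET;
    import javax.ws.rs.core.Response;

    public class ApiResource {

        // Constant JSON payload, so the benchmark measures the container rather than the application.
        public static final String RESPONSE = "{\"greeting\":\"Hello World!\"}";

        // Handles GET requests by returning the constant payload with a 200 status.
        @GET
        public Response test() {
            return ok(RESPONSE).build();
        }
    }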

The code has been kept deliberately very simple. The very same API code is executed on all containers.

For more details about the code, please see the link in the Resources section.
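Since the same API code runs on every container, it can be worth confirming that each one really returns the identical body before benchmarking. The following is only a minimal sketch of such a smoke check, not part of the benchmark code: the class name `SmokeCheck` and both helper methods are hypothetical, and the stand-in server is the JDK's built-in `com.sun.net.httpserver.HttpServer`, used here purely so the example runs on its own. It is not one of the four containers under test.

```java
import com.sun.net.httpserver.HttpServer;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.OutputStream;
import java.io.UncheckedIOException;
import java.net.HttpURLConnection;
import java.net.InetSocketAddress;
import java.net.URL;
import java.nio.charset.StandardCharsets;

final class SmokeCheck {

    // Same constant payload as ApiResource.RESPONSE in the article.
    static final String EXPECTED = "{\"greeting\":\"Hello World!\"}";

    /** Sends a GET request and returns the response body; fails on any non-200 status. */
    static String fetchBody(String url) {
        try {
            HttpURLConnection conn = (HttpURLConnection) new URL(url).openConnection();
            conn.setRequestMethod("GET");
            try {
                int status = conn.getResponseCode();
                if (status != 200) {
                    throw new IllegalStateException("Unexpected HTTP status " + status);
                }
                try (InputStream in = conn.getInputStream()) {
                    ByteArrayOutputStream out = new ByteArrayOutputStream();
                    byte[] buffer = new byte[1024];
                    int read;
                    while ((read = in.read(buffer)) != -1) {
                        out.write(buffer, 0, read);
                    }
                    return new String(out.toByteArray(), StandardCharsets.UTF_8);
                }
            } finally {
                conn.disconnect();
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    /** Starts a stand-in server on a free local port (assumption: NOT a benchmarked container). */
    static HttpServer startStandIn() {
        try {
            HttpServer server = HttpServer.create(new InetSocketAddress("127.0.0.1", 0), 0);
            server.createContext("/", exchange -> {
                byte[] body = EXPECTED.getBytes(StandardCharsets.UTF_8);
                exchange.getResponseHeaders().add("Content-Type", "application/json");
                exchange.sendResponseHeaders(200, body.length);
                try (OutputStream os = exchange.getResponseBody()) {
                    os.write(body);
                }
            });
            server.start();
            return server;
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    public static void main(String[] args) {
        HttpServer server = startStandIn();
        try {
            String url = "http://127.0.0.1:" + server.getAddress().getPort() + "/";
            String body = fetchBody(url);
            System.out.println(EXPECTED.equals(body) ? "OK" : "MISMATCH: " + body);
        } finally {
            server.stop(0);
        }
    }
}
```

To check a real container, one would skip `startStandIn()` and pass the URL of the running Tomcat, Jetty, Grizzly or Undertow instance to `fetchBody`.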

We ran the load tests using ApacheBench with concurrency levels of 1, 4, 16, 64 and 128.

The ranking of the containers, from fastest to slowest, does not change with the concurrency level.

So here I am publishing only the results for 1 and 128 concurrent users.
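To make the test matrix concrete, here is a small sketch that builds one ApacheBench command line per concurrency level. The `-n` (total requests) and `-c` (concurrency) flags are standard `ab` options. The class name `AbCommands`, the endpoint URL and the request count are assumptions made for illustration; the article does not state the values that were actually used.

```java
import java.util.Arrays;
import java.util.List;

final class AbCommands {

    // Concurrency levels listed in the article.
    static final int[] CONCURRENCY_LEVELS = {1, 4, 16, 64, 128};

    /** Builds the argument list for one ab run: -n total requests, -c concurrent requests. */
    static List<String> abCommand(int totalRequests, int concurrency, String url) {
        if (concurrency < 1 || concurrency > totalRequests) {
            // ab itself refuses a concurrency level greater than the total number of requests.
            throw new IllegalArgumentException("concurrency must be between 1 and totalRequests");
        }
        return Arrays.asList(
                "ab",
                "-n", String.valueOf(totalRequests),
                "-c", String.valueOf(concurrency),
                url);
    }

    public static void main(String[] args) {
        String url = "http://localhost:8080/"; // hypothetical endpoint, not taken from the article
        int totalRequests = 100_000;           // assumed request count, not taken from the article
        for (int concurrency : CONCURRENCY_LEVELS) {
            System.out.println(String.join(" ", abCommand(totalRequests, concurrency, url)));
        }
    }
}
```

Each list could also be handed to `new ProcessBuilder(command).inheritIO().start()` to launch the runs from Java instead of printing them.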

System Specification

This benchmark was executed on my laptop:

CPU:

model name : Intel(R) Core(TM) i7-3537U CPU @ 2.00GHz

model name : Intel(R) Core(TM) i7-3537U CPU @ 2.00GHz

model name : Intel(R) Core(TM) i7-3537U CPU @ 2.00GHz

model name : Intel(R) Core(TM) i7-3537U CPU @ 2.00GHz

RAM:

                 total       used       free     shared    buffers     cached
    Mem:          7.7G       4.9G       2.7G       399M       206M       2.3G
    -/+ buffers/cache:       2.5G       5.2G
    Swap:         7.9G         0B       7.9G

Java version:

java version "1.8.0_66"

Java(TM) SE Runtime Environment (build 1.8.0_66-b17)

Java HotSpot(TM) 64-Bit Server VM (build 25.66-b17, mixed mode)

OS:

Linux arcad-idea 3.16.0-57-generic #77~14.04.1-Ubuntu SMP Thu Dec 17 23:20:00 UTC 2015 x86_64 x86_64 x86_64 GNU/Linux

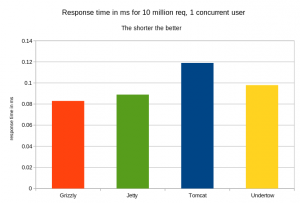

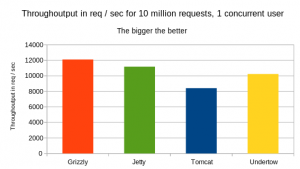

Number of concurrent users = 1

As the two graphs above show, Grizzly leads the benchmark, followed by Jetty and then Undertow. Tomcat comes last in this benchmark.

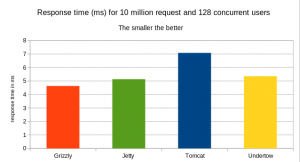

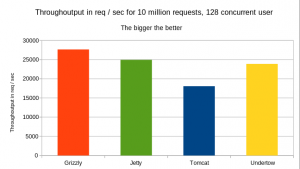

Number of concurrent users = 128

Grizzly still leads here: raising the concurrency level to 128 did not change which server is best or worst.

We also tested concurrency levels of 4, 16 and 64, and the ranking was pretty much the same.
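The two metrics compared in this benchmark, throughput and response time, are tied together by the concurrency level. The sketch below shows that relationship. To my understanding it mirrors how ApacheBench derives its "Requests per second" and "Time per request (mean)" lines, but treat it as an illustration rather than a description of ab's internals. The class name `AbMetrics` is hypothetical, and the numbers in `main` are made-up inputs, not measurements from this benchmark.

```java
final class AbMetrics {

    /** Throughput: completed requests divided by the elapsed wall-clock time in seconds. */
    static double requestsPerSecond(long completedRequests, double elapsedSeconds) {
        return completedRequests / elapsedSeconds;
    }

    /**
     * Mean time per request in milliseconds, as seen by a single client:
     * with N requests in flight at once, each one takes N times longer than
     * the average gap between completions.
     */
    static double meanTimePerRequestMs(long completedRequests, double elapsedSeconds, int concurrency) {
        return concurrency * elapsedSeconds * 1000.0 / completedRequests;
    }

    public static void main(String[] args) {
        // Illustrative inputs only (assumed, not measured): 100,000 requests in 10 seconds.
        long completed = 100_000;
        double elapsedSeconds = 10.0;
        for (int concurrency : new int[] {1, 128}) {
            System.out.println("concurrency=" + concurrency
                    + " throughput=" + requestsPerSecond(completed, elapsedSeconds) + " req/s"
                    + " meanTime=" + meanTimePerRequestMs(completed, elapsedSeconds, concurrency) + " ms");
        }
    }
}
```

This is why the same throughput figure can correspond to very different response times at concurrency 1 and at concurrency 128, and why both graphs are needed for each level.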

Conclusion

For this benchmark, a very simple Jersey REST API implementation was used.

Grizzly seems to give us the best throughput and response time, no matter the concurrency level.

In this test, I used the default settings of each web container.

And as we all know, no one puts a container into production with its default settings.

In the next blog post, I will change the server configurations and rerun the very same tests.

Resources

The source code is available on GitHub